by Tom Gaylord, The Godfather of Airguns™

Writing as B.B. Pelletier

This report covers:

• Equipment to fill the gun

• Silicone chamber oil

• Diver’s silicone grease

• Plumber’s tape

• No such thing as Teflon tape

• A chronograph tells the whole story

• Other things?

• Summary

Today, I’m writing this for the sales representatives at Pyramyd AIR, who are always asked what else you’ll need when you buy a precharged airgun. Precharged airguns need some things to go with them to operate smoothly. Think of the batteries you always need for electronics. Are they included in the box or do you have to buy them extra?

Equipment to fill the gun

This is the big one! How does air get into your new gun? Back in the 1980s, customers were surprised to learn they had to buy the fill device (also called a decant device, hose and gauge, and other things) separate from the airgun. They never thought about people possibly owning two such guns that one fill device would service. And they also didn’t appreciate how much these fill devices cost — and how much could be saved by not buying a second one that was identical.

These days, most people know you need a fill device of some kind to connect an air source to an airgun, but there’s more to it than just that. Some companies, such as AirForce, Crosman, Daystate and Dennis Quackenbush, use the now-common Foster quick-disconnect fittings that simplify everything. One common fill hose services all the airguns made by these companies. On the other hand, Air Arms, BSA, Evanix and others still have proprietary connections. The question is: What do you need to fill your new airgun?

Pyramyd AIR provides an easy solution to this dilemma: a little decision tree that helps you find exactly what you need for your new airgun. You can try it out right now and see how it works. Select any PCP on their website and look at the product page. I’m going to choose the Air Arms S510 Xtra PCP Carbine.

On that page, find the tabs where you see these words:

Description Specifications Customer reviews Questions & Answers PCP Hookup

Click on the words PCP Hookup, and you’ll see the tool they’ve provided. Don’t be embarrassed if this is new to you. I didn’t notice it until Edith came into my office and walked me through it — and I write this blog!

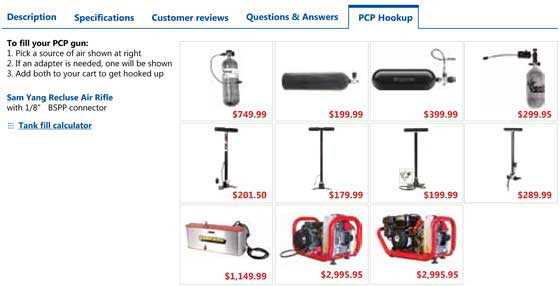

When you click on PCP Hookup, this is what you’ll see.

Now, click on the air source you will be using to fill your PCP, and the complete connection requirements will come up. Try several of these fill source options (by clicking the reset button), so you can fully appreciate what they’ve done for you. As the fill source changes, so do the connection requirements. If no additional adapters or hoses are shown after you click on your preferred fill device, that means none are needed. And it states that at the top of the left side.

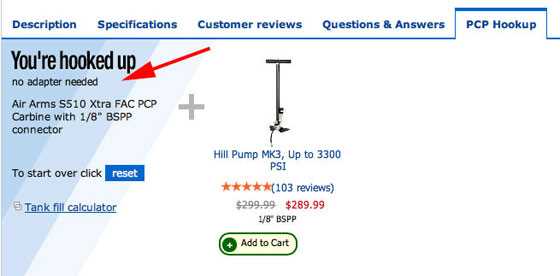

When I clicked on the Hill MK3 pump as my preferred fill device, it said on the top left column that I didn’t need any additional hoses or adapters to make this fit the Air Arms S510 Xtra FAC PCP air rifle. If I wanted to find other fill devices, I would click the “reset” button to go back to the full list on the PCP Hookup tab.

Like Einstein’s relativity equation, everything sounds simple after seeing this software tool. But airgunners have lived 34 years without it and can tell you — it isn’t obvious!

[Editor’s note: Whether you’re looking for hand pumps or carbon fiber tanks, always check out more than one fill option. Some devices are more expensive, but they may already include the hoses and adapters you need and may end up being more economical than buying a fill device that requires you to buy additional hoses and adapters.]

Silicone chamber oil

If you’ve read even a couple weeks’ worth of these reports, you’ve seen me recommend silicone chamber oil for sealing airguns. This stuff is so necessary that veteran airgunners should all know they need it. When I worked at AirForce Airguns, I was responsible for testing every valve they made. When a valve leaked (and a small percentage of them did leak on the first test), it was my job to fix it. There are just two things that can fix most high-pressure air valves: getting rid of dirt and silicone chamber oil.

I would use a heavy rubber mallet to smack the valves, causing them to pop open loudly under pressure. That also blew out any dirt that was in the sealing surfaces and also made a perfect impression of the metal valve face in the hard synthetic valve seat. It was like breaking in leather shoes. Once broken-in that way, that valve would work reliably for — well, I don’t really know. I have some valves that are now 14 years old, and they still hold indefinitely.

But silicone oil was needed for the o-ring that seals the valve inside the air tank. We actually used a light industrial silicone grease that works very well when you can apply it directly to the parts; but when the gun is together, it’s hard to get grease to go where you want. Light silicone oil will go everywhere, and I cannot remember how many hundreds of airguns I’ve fixed with it — the most recent being the Crosman 2240 that has the HiPAC air conversion installed.

I’ll even go farther and advise you to get a bottle of silicone chamber oil with a needle applicator. That applicator is very handy for putting the oil exactly where you want it. You’ll also find it wonderful for oiling piston seals on a spring gun through the air transfer port.

Diver’s silicone grease

I just said that silicone grease and oil could be used interchangeably, but what I didn’t say is that you pick the one that best suits the job. That’s why I also have silicone grease on hand at all times. If there’s an o-ring that can be seen, like on the bottom of 200- and 300-bar air fittings, use the grease instead of the oil. For the o-ring that seals the HiPAC air tank to the Crosman 2240 pistol, use the grease. Not only does the silicone grease seal air just like silicone oil, it also remains on the parts for a long time. I have 3 jars of it, and one is always in my range bag.

Plumber’s tape

Here’s a product that Pyramyd AIR doesn’t carry! But no worries, because just about every hardware store stocks it. Plumber’s tape is for sealing joints that thread together.

Plumber’s tape is not sticky. It seals the smallest holes in threaded joints.

If this is new to you, it’s tape that doesn’t stick to anything. It has no glue! It is elastic and rather thin, but when you wrap threads with it the correct way, it expands into the smallest crevices and seals the threads against air loss. And, having written that, I guess I will now do a report on how to properly wrap threads for a repair.

Plumber’s tape lubricates the threads, so joints go together tighter (farther), plus it deforms easily, blocking those same threads. It also keeps threads from seizing, so they come apart easier.

No such thing as Teflon tape

Many people call this Teflon tape. But it isn’t. Teflon is a registered trademark of DuPont; so, unless they make the tape (they don’t at the present time), it isn’t Teflon. It’s more correctly called PTFE (polytetrafluoroethylene) tape, but plumber’s tape is probably the best name for it.

It’s not expensive, and I use it a lot; so, I keep several rolls around the house. I also keep a roll in my range bag, where it’s saved a PCP field test more than once.

A chronograph tells the whole story

You knew I was going to recommend one of these, didn’t you? If not, you’re a new reader of this blog. This is one of the most important diagnostic tools an airgunner can own. A chronograph tells you how fast the pellets are traveling when they leave the muzzle of your airgun.

For many years, I used the Oehler 35P printing chronograph. I was raised in a time when Oehler chronographs were the most accurate instruments money could buy, and writers had to have one to be taken seriously. Then, I started writing this blog, and my chronographing needs increased tenfold! When I did a test of the Shooting Chrony chronograph, I was impressed with how convenient it is. I now keep one set up in my office permanently, which is where all my indoor velocity data is gathered. The price is right, and the unit is small, rugged and easy to transport. And it has an anchor point to mount it to a camera tripod.

If you want a choice, I’ve read good reports about the Competition Electronics Pro Chrono Digital Chronograph. The Bianchi Cup uses it, and that’s a big-time firearm competition! It doesn’t cost much more than the Shooting Chrony, so you have two good instruments to choose from.
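To show what those velocity numbers are good for, here is a small Python sketch that turns a string of chronograph readings into muzzle energy and extreme spread. The function names and the sample shot string are made up for illustration. The 450240 divisor is the constant commonly quoted for converting pellet weight in grains and velocity in feet per second into foot-pounds.

```python
# Minimal sketch: turning chronograph readings into muzzle energy and extreme spread.
# Function names and the sample data are hypothetical, for illustration only.

GRAINS_FPS_TO_FPE = 450240.0  # commonly quoted divisor for grains * fps^2 -> foot-pounds


def muzzle_energy_fpe(pellet_weight_grains, velocity_fps):
    """Return muzzle energy in foot-pounds (fpe)."""
    return pellet_weight_grains * velocity_fps ** 2 / GRAINS_FPS_TO_FPE


def extreme_spread(velocities_fps):
    """Return the gap between the fastest and slowest shot in a string."""
    return max(velocities_fps) - min(velocities_fps)


if __name__ == "__main__":
    shot_string = [880.0, 883.0, 885.0, 881.0, 884.0]  # made-up 5-shot string, fps
    average = sum(shot_string) / len(shot_string)
    print(f"average velocity: {average:.1f} fps")
    print(f"extreme spread:   {extreme_spread(shot_string):.1f} fps")
    print(f"muzzle energy:    {muzzle_energy_fpe(7.9, average):.2f} fpe with a 7.9-grain pellet")
```

Tracking these two figures over time is one way to see whether a gun is holding steady or starting to drift.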

Other things?

Is there more? Of course, but these are the essentials. The chronograph you can live without for a little while, but the other things you really should get right away.

Summary

Buying your first PCP airgun always seems to be a leap of faith. You’re going where you’ve never been, to places others have warned you to avoid. You’ve done the research, but you still wonder if the good stories aren’t all just part of a grand scheme to hoodwink you.

I’ve told you about all the things I think you absolutely need when you get a PCP. Pyramyd AIR has coined a new word for them — PCP accessories. Obviously you don’t need 2 chronographs or 2 different silicone oils, but you’ll eventually need one of each if you’re going to enjoy your new precharged airgun to its fullest.

“Need to consider”

Things you “will also purchase”…

I have used plumber’s tape for years. I recently built a fuel-injected, turbocharged, nitrous oxide motorcycle using dozens of threaded hose fittings. More than one top builder warned me not to use plumber’s tape. They said the first couple of threads would shred the tape and little strings would possibly go into the fuel system. When I took some things apart, I did notice these strings. I never had a problem. But I only use the liquid thread sealant. It’s white and works the same as the tape. It even has a slight glue-like property when it dries.

Plumbers have been using pipe dope since before anyone ever heard of DuPont and it does work for threaded connections very well while remaining pliable, aiding disassembly.

Reb

Don’t get a plumber to work on your air gun then. In a water pipe the things down stream are large enough to allow a stray string to pass. In an air gun the strings could clog a valve or cause it to leak.

Since the tape is normally applied to the nipple end, I’d have a hard time believing any “strings” would form that could be sucked into the system. By the time you get the threads cutting the tape, the nipple should be moving deeper in than the tape is moving, pinching the tape into a form-fitting gasket.

Now… Removing the fittings may leave “strings”, but one should be cleaning all that stuff off before putting the stuff back together.

I sort of like the fill in the blank aspect.

Kinda like “Things you’ll forget”

I’m trying to test the new features but my guns are all sold out.

It looks like a quick reference for tank volume would come in real handy.

Reb,

People don’t understand what tank volume means. For example, does an 88 cubic-foot carbon fiber tank really have 88 cubic feet inside? No! It holds 88 cubic feet of air at normal air pressure. People do not need to know tank volume — they need to know tank capacity, and Pyramyd AIR does tell you that.

B.B.

Wouldn’t capacity change as pressure increases or decreases, whereas volume would remain a constant?

Reb

Reb,

That is the difference.

B.B.

B.B./Tom (I’m transitioning from B.B. to Tom),

I simply have two Marauders and a Hill hand pump. Therefore, SCUBA (3000 psi) and SCBA (4500 psi) are all a bit of a mystery to me.

Are you saying that a 3000 psi SCUBA tank with a listed 88 cubic feet capacity holds as much unpressurized actual volume of air as an SCBA tank rated at 88 cubic feet at 4500 psi? (Presumably the carbon fiber wrapped SCBA tank would be quite a bit smaller than the lower pressure aluminum or steel tank.) I do understand that if I fill my Marauders to 2600 psi, I will get precious few fills from even a large 3000 psi tank. Once it gets to the same pressure as the gun’s reservoir, the transfer of air stops.

I hope my question was not too terribly stupid.

Michael

Michael,

The 88 cubic-foot carbon fiber tank (that has an aluminum bladder under the carbon fiber) is smaller internally than the scuba tank, yet it holds more air (88 cu. ft. compared to 80 cu. ft.) because the air is compressed to a higher pressure.

When you fill airguns from these tanks, the difference in fills is amazing. The carbon fiber tank will give up to 9 times as many full (3000 psi) fills as the scuba tank, and up to 45 times as many partial fills.

B.B.

The tank is rated as the capacity at the rated fill pressure. The common 80cf tank “squeezes” the air of a room 4x4x5 feet (okay, a small closet) into a canister the size of a rolled up sleeping bag — at 3000PSI.

Carbon fiber tanks look smaller, but since they are filled to 4500PSI may hold the same, or more, actual air (and for PCPs, provide more full fills: a 3000PSI tank filling a 3000PSI PCP will now be less than 3000PSI on the second fill attempt; a 4500PSI may still be over 4400PSI on the second fill)
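The capacity-versus-volume point in this thread can be put into numbers. The Python sketch below assumes ideal-gas behavior at constant temperature (real air at 4500 psi deviates from this) and treats gauge readings as if they were absolute pressures. The tank and reservoir volumes are hypothetical placeholders, not specifications of any product, and the function names are made up for illustration.

```python
# Minimal sketch: tank "capacity" vs. internal volume, and how many full fills a tank gives.
# Assumes ideal gas at constant temperature; all volumes below are hypothetical.

ATMOSPHERE_PSI = 14.7  # approximate sea-level air pressure


def free_air_capacity(internal_volume, pressure_psi):
    """Volume the stored air would occupy at normal air pressure (same units as internal_volume)."""
    return internal_volume * pressure_psi / ATMOSPHERE_PSI


def count_full_fills(tank_volume, tank_psi, gun_volume, gun_start_psi, gun_target_psi):
    """Count how many times the tank can bring the gun from gun_start_psi up to gun_target_psi."""
    if gun_target_psi <= gun_start_psi:
        raise ValueError("target pressure must be above the starting pressure")
    fills = 0
    while True:
        # Pressure both vessels would settle at if simply connected and left to equalize.
        equalized = (tank_psi * tank_volume + gun_start_psi * gun_volume) / (tank_volume + gun_volume)
        if equalized < gun_target_psi:
            return fills  # the tank can no longer push the gun all the way to the target
        # Air moved into the gun lowers the tank pressure in proportion to the volume ratio.
        tank_psi -= gun_volume * (gun_target_psi - gun_start_psi) / tank_volume
        fills += 1


if __name__ == "__main__":
    # Hypothetical volumes in liters: a 9 L tank at 4500 psi, an 11 L tank at 3000 psi,
    # and a 0.2 L gun reservoir topped up from 2000 psi to 3000 psi.
    print("full fills from the 4500 psi tank:", count_full_fills(9.0, 4500.0, 0.2, 2000.0, 3000.0))
    print("full fills from the 3000 psi tank:", count_full_fills(11.0, 3000.0, 0.2, 2000.0, 3000.0))
```

The internal volume never changes, but the amount of air stored, and so the number of full fills, depends on how far the tank pressure sits above the gun's fill pressure.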

Another form of silicone grease that is easily obtainable is silicone fishing rod and reel grease. It comes in a small tube like toothpaste, has an applicator tip like pellgun oil has, and works very well on O-rings and seals that you can lube when disassembled.

Buldawg

Thanks for that little tidbit. Despite being in the “mountains”, we have a dive shop in the city where I get grease, but having some in a tube like that would be “reel” nice.

RR

I thought that the silicone lube in a tube would be reel nice too.

Buldawg

buldawg

I’m getting ready to mail you that tray in a minute when I leave for work.

And I burned my phone up last night. Seriously, the screen and everything was fading in and out in different places this morning. How long did we talk anyway? But I got me one of those new smart phones this morning after I got the kids to school. Now I got to get smart and learn how to work it. 🙂

Gunfun

We were on the phone for a little over 2 hours, and my phone was down to 19%, so it was about to crash also. Was your phone an old flip phone? I had one of those until a year ago, and it started doing the same thing, so I got an iPhone 5 paired with my wife’s account. It was a definite learning curve to figure out how everything worked.

I think the biggest issue was that I am used to Windows, and going to an iPhone, which is Apple-based, is what caused the learning curve because the two systems work way different.

I will let you know when I get the tray and how well it fits and works. If there is ever anything you need from me, just ask, because I really appreciate you making the tray. I also really enjoyed our talk last night and will do more in the future for sure.

Buldawg

buldawg

My old phone was a flat touch screen. A Pantech Hotshot. I could slide the screen but not expand it or make it smaller. And reception on the internet was not that great all the time.

This one I got is a Droid. I already like it much better. And I was lucky I didn’t lose all my contacts.

And they gave me a tracking number for your package. I will email you it tonight when I get home from work.

Gunfun

Sounds good, and don’t work too hard today. Once you get used to using the new phone it will be easier, as I have finally got used to mine, although the only thing I don’t like is these Chinese phones don’t speak English very good when voice texting.

Buldawg

buldawg

I’m getting better with it. Learning to navigate is always a fun thing for me to learn. It seems to have a better camera than my other phone also. So maybe I can take some better pictures. Will have to see how that goes.

Gunfun

Practice, practice, practice and it will eventually all be instinct, just like shooting or staging at the tree. Once you get it all figured out it will be well worth it. When I first got my iPhone it frustrated me all the time, and slowly but surely I figured it out, so now it is a piece of cake, and I can remember when I said I would never get a smart phone. But they still don’t speak English very good.

Got my power adjusters today and with the adjuster backed off to the lowest tension with the black spring it is right at about the same tension as if you just put the black spring with the stock end cap. So I will get it completely installed in my 22 tomorrow and see what kind of numbers it will make at 3k.

I have to drill and tap the top hole for the rear breech screw because the Prod holds the breech down with two screws at either side on the rear. I think now that the 1720T end cap may have been the correct one to have ordered, but I don’t remember if it is an adjustable one or not, and I had looked through so many schematics to find the part numbers that when I saw the Prod one I just went with it. It will work once I drill the rear hold-down hole and tap it, and then I just have to get the RAI adapter for a Prod, as it is already threaded for the adapter in the end cap to put the tactical stock on it if I ever want it.

Buldawg

buldawg

The one from the 2300S would have bolted right in. I thought you saw that I posted it before.

I don’t have the number in front of me but I got it at home. Or go to the Crosman site and look up the Crosman 2300S drawing. Most all of the pistols that use CO2 cartridges can interchange the end caps.

I should be more clear. The pistols that take the CO2 cartridge in the main tube like the 2240. Even a Disco end cap will work. That’s what I have in my 2240 conversion. It has the threaded hole already in it for the degassing tool. I just screw an Allen bolt in it and put a little plunger in front of the striker spring. The bolt pushes on the plunger, which pushes on the spring. Either way, just thought I would let you know.

Gunfun

I must have missed that post or it just did not register with me, but the Prod one fits. I just have to add the one hole in the center on the top side, so it’s no big deal, and I am learning to look closer at the schematics also for the little details.

Hopefully the bracket for the forearms will be here by weeks end and I can get the 22 completed and I am going to get with my machine shop buddy and get the 177 barrel done this week so by next week they should both be done.

My 60C valve failed again today, so the silicone fuel line is not resilient enough to hold up to the repeated slamming shut of the valve on the seat, and I am going to go back and try my Crosman valve in it. When I got it put back together with the fuel line valve seal, I also found that the Foster fill fitting was leaking some around the threads, and I stopped that with a little more tightening of the fitting, so my Crosman valve may not have been leaking after all. We will see when I get the valve changed back out. My never-ending quest for the perfect performance from all my pellet guns keeps me busy. Only my Hatsan and the Firepower do not need any attention, but I have not tried to improve them yet either.

Buldawg

buldawg

I will see if I can email you that tracking number when I get home also.

Gunfun

Sounds good. I am getting ready to go to bed because getting up at 5:30 this morning to put my grandson on the bus has caught up to me.

Talk to you tomorrow.

Buldawg

LOL! I understand what you are saying that there is no such thing as Teflon tape, however we just bought a new shower head for my mother-in-law and the instructions on the back of the package say that I will need a wrench and Teflon tape.

RR,

Yeah! When I researched this article I discovered that references to Teflon tape are turning DuPont inside-out! They have a campaign to educate the public that there is no such thing.

B.B.

BB

How does one ture DuPont inside out

Buldawg

buldawg,

That should be turning, not turing 🙂 I fixed it.

Edith

Edith

Sorry, I just couldn’t resist. I am feeling mischievous this AM

Buldawg

Though I wouldn’t be a bit surprised if there were some people at DuPont that couldn’t pass a Turing test…

As for turning it inside out… Pudtno? (sounds a bit like someone spitting)

I always called it, and heard it called, thread tape or seal tape. I’m doing the multi-pumps and 13xx modding as of late. I never took multis as seriously as they deserved back when I last had an Airmaster 77, but they are cool. Some day this article will apply to me… someday…. Still thinking carbine/bullpup TalonP/FX-Evanix, and still out of my wallet’s dreams, but I’m happy going to a new level with pumps. Has anyone reduced the probe girth to increase airflow? I saw it being sold as a custom aftermarket mod part, and it seems like a good idea and something I can easily accomplish. Any other DIY for the 13pc77 you guys suggest?

RifleDNA,

When I got my 3120 I was fascinated by its hollow bolt probe and the way it held the .22 round ball ammo inside it. When the bolt is turned and locked in place, the bolt has a hole in the bottom that mates up with the transfer port; when the air is released, it fills the hollow space directly behind the pellet. Nothing for the air to have to work its way around. I have read about pulling the magnet outta a BB repeater and epoxying in with a short length of music wire, but it sounds like a lotta work for little return. Both are ways to increase efficiency by reducing obstructions to airflow. Keep your eye out for a Crosman 101, 140 or 1400. Good luck!

Reb

RDNA

Glad you’re messing with the pumpers. Them 1300 series guns can be made into a good, light, powerful and accurate air gun.

A longer barrel, 18+ inches long (I think anyway), steel breech, heavier striker spring and that opening-the-transfer-port trick that buldawg came up with to use the refrigerator tubing.

All them things will add up to a nice little gun. Oh, and you got to put a butt stock on the gun, like the 1399 stock or the AR adapter and stock that Dave at RAI sells.

RifleDNA,

Whatever became of your Airmaster? Mine’s livin’ the life! After I sealed up the breech block with shoe goo it’s putting out just over 10 fpe with 14 pumps.

Reb

RifledDNA and REB

The pumpers are very easy and good guns to hot rod. I have built a 2289 with the 18-inch barrel like Gunfun suggested. I went in and cut two threads off the front half of the valve body, and then used a Dremel tool with a small aluminum bit on the rear half to remove about two of the threads in the back end of the body, to make a larger air volume inside the valve. To do the rear half, take a slotted-head screw that is a tight fit in the valve stem hole and put it in the stem hole with a nut on the back side, so you can tighten it up to make it into an arbor that you can chuck in a drill to spin the valve body. Clamp the drill in a vise, or find some other way to keep the drill stationary, then turn the drill on and lock it on with the trigger lock. Then very carefully and slowly use the Dremel tool with the barrel-shaped aluminum bit to remove the last two threads on the inside of the valve body. You want the head of the screw you are using for the arbor to be bigger in diameter than the valve seat in the rear half of the valve, so you cannot damage the valve seat with the Dremel bit.

Then when you have that done, you can go to a hardware store with the spring from inside the valve to get a spring the same diameter as that spring but with a much lighter strength (I got one from Lowes that comes 5 in a bag for about a buck and a half). It can be a little longer, just as long as it has less tension than the stock spring.

Then either get an adjustable hammer spring rear cap for the tube or take the hammer spring with you and get one that is stiffer than the stock one.

What you are trying to accomplish is to make the hammer spring stronger than the valve spring, so that the gun will not pump up unless it is cocked. Cocking allows the valve spring to close the valve so it can be filled with compressed air, and then when you fire it, all the air you compressed by pumping will be fully released with no valve lock. Then I drill out the transfer ports in the valve and barrel to 11/64″, and while you are at the hardware store, buy a foot of 1/4″ OD refrigerator ice maker plastic tubing, which is 11/64″ ID. Slide it up on the smooth end of the 11/64″ drill bit and use a tubing cutter to cut a piece about .050″ longer than the combined height of the metal transfer port bushing and rubber seal, and put that in place of the metal bushing and rubber seal. It is not necessary to spot face the valve and barrel where the transfer port sits, but since I have a 1/4″ end mill bit and a drill press, I spot face the valve and barrel about .050″ deep to help keep the tubing from blowing out the side. I have pumped my 2289 and 1400 to 25 pumps without any blown seals or valve lock issues, and I get very close to 950 fps with the 2289 and over 1000 fps with my 1400, which has a new Disco 24-inch barrel on it with a modern steel breech.

Do not do these mods if you do not have a steel breech as you will explode the plastic breech with the pressures you can develop at 15 + pumps.

The way to find the maximum number of pumps your gun can take is to pump it past Crosman’s stated ten pumps, one additional pump at a time, and fire it. Then recock and pull the trigger again. If it still has some air left in the valve on that second trigger pull, that is the point of valve lock; go one pump below that number for the max pumps your gun can take and still give full power.

My mods allow for it to be pumped to 25 pumps for sure and get full air release. It may handle more, but I have never gone higher, because by the time you get to 25 pumps you will be tired after four or five times at that many pumps. It does give you more power by just going to 15 over the stock ten.

Buldawg

Some guns can’t handle the stress of being pumped over 15-20 times. Case in point: I took my 392 to 25 pumps while searching for valve lock or a second shot. I eventually got my second shot, but only after a detonation of seal oil. Both shots were as loud as rimfires and buried .22 roundballs 1/2″ deep in a dried-out telephone pole after penetrating an aluminum tag. I had to replace the exhaust valve due to its seal rolling out of its channel, and if you look real close you can tell the forearm is sprung from the metal bending while being overpumped. Although I thought I was being careful, it did take its toll. That’s what Mac’s steroid versions take care of: the weakest links. I’m very confident taking my Airmaster to 20 pumps, but I don’t take my 392 over 14 anymore. I have considered revising the exhaust valve before resuming testing.

Reb

Reb

Yes, I realize some are not at the same strength as some others are, and I do not pump my 1400 or what used to be my 2289 that is now a 2240 hi-pac to 25 pumps very often, as it is just too much effort to get it that high. The most I normally go is 15 pumps.

Is your 392 a self cocker like the 140s and 1400s are, or does it cock with the bolt? I probably should have mentioned that a brass valve, if one can be found, is stronger also. My 2289 has the aluminum valve, and I guess I am lucky that they have not detonated or blown seals.

My 1400 being a self cocker is such that the more you pump it the harder the trigger pull becomes because the sear is holding the hammer against the valve cap so the more pressure in the valve the more load the hammer and sear are having applied to them. And the 140s and 1400s are known to blow the quad seal out of the cap if over pumped but I am power hungry with air guns just as I am a speed demon with my bikes. You only live once so go for all the gusto you can and I believe in fate and that the good lord already has my day planned and waiting for me so that there is nothing I can do in my lifetime that will get me there until he is ready for me to be there.

I have always lived on the edge and don’t know any other way to do it because the adrenalin rush is the greatest high there is and I am addicted to it.

Buldawg

The 392 has to be cocked and everything except the pin of the valve and the seal is brass.

I’ve got my eye out for the same guns I told RifleDNA to watch for plus my 3120 if I ever get another pump arm for it.I love my pump guns!

Reb

Yea, I am the original owner of my 1400 and have had it since 68. I did have to put a new barrel on it because I had shot it so much that there is no rifling left in the original barrel. I guess I have been lucky with mine not blowing up, but like I said, I do not pump to 25 pumps very often and actually just did a few times to see what fps it would do. It is a bear to pump it up 25 times for sure.

There are a couple 1400s on gun broker right now so you may want to check it out and they weren’t real high yet , but that can change quick as you know.

Buldawg

Have you noticed the 600’s dropping recently?

Reb

No, I have not, as I am not a big pistol fan in pellet guns, because with my arthritis it is hard to hold them steady enough to be anywhere consistent in accuracy.

I guess I like rifles and bench shooting because I can at least hit what I am aiming at most of the time.

buldawg

Remember me talking about the 600 as a base gun for what Ya’ll are doing with the 2240’s? Check this out! http://anotherairgunblog.blogspot.com/2009/07/crosman-600-bulk-fill-barrel-extension.html

Reb

Reb

Now you are getting my interest in pistols going. I just got to finish all the projects I have going right now and sell some unused stuff on eBay to fund more projects.

Sometimes being a pack rat does have its advantages, as one man’s junk is another man’s treasure.

Buldawg

Thanks for the info, guys. I picked up some seals today, an assortment of little ones that’ll fit the probe, transfer, etc., to see where I can beef up the seals. I am going to pull the bolt and thin the probe and look at the port, but besides an additional seal or a bit of angling I don’t want to mess too much with it. Definitely longer barrel, steel breech. I did add a flat-headed bolt cut down to about 3/8ths to shim and spring guide on the hammer side. I figured a bit over 1/32nd shim to spring and the bolt adding weight to the hammer would give a little more kick, and it sounded stronger right off. I’ll keep you updated. Need a chrony!!

RifledDNA

A chrony is definitely a must to see if what you do helps or hurts the performance. The addition of the bolt head under the hammer side of the spring will definitely help by adding tension to the spring. I am not so sure how much difference the added weight will make, as it is not a spring powered gun, where any extra weight does help with the velocity to a point and then it starts to damage components.

The two best and easiest mods you can do are to take the hammer and valve springs out and go to your local hardware store and find springs that are close to the same length and diameter as the stock ones. You want the one for the hammer to be stronger than the stock one (thicker wire diameter, or slightly longer but not too much longer, so you do not encounter coil bind when cocking) and the valve spring to be lighter (smaller wire diameter) but close to the same length as the stock one.

What you want to end up with is a gun that will not pump up in the uncocked position, because the hammer spring is holding the valve open against the valve spring pressure. That requires you to cock the gun to pump it up, and it will cause the full amount of air that you compressed into the valve to be exhausted when you pull the trigger, thereby giving you the most fps and fpe per shot.

Buldawg

OK fine, here is a “stupid” question. Why can you not use silicone grease for sproingers? I know it is used as a sealant rather than a lubricant, but it does have lubricating qualities and would eliminate dieseling.

RR,

There are different grades of silicone oil (not silicon). One is very thin and suitable for sealing, only. Another is good for lubrication, but has a low flashpoint and cannot suffer the heat of a spring piston powerplant.

Door-hinge silicone oil from the hardware store is not correct for lubricating piston seals or PCPs.

B.B.

I have really enjoyed stripping the moly from my compression tubes and internals, and relubing my R9 with Krytox 205. The 105 and Ultimox 226 apparently work well too. No more dieseling!

My gun is Vorteked. Running at 13.7 FPE with 7.9’s. After 300 shots my ES was 5 fps over 10 shots. Now, after 4000 shots, my ES is 4 fps over 10 shots. The Krytox 205 is great.

They must have trusted it pretty well before they qualified it for use with Oxygen equipment!
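For readers who want to relate numbers like the 13.7 FPE, 7.9-grain pellets and 4 fps ES quoted above to what a chronograph shows, here is a minimal sketch. It assumes the commonly used airgun energy constant of 450240 (roughly 2 × 7000 grains per pound × 32.16 ft/s²; some calculators use a slightly different value), and the function names and the 5-shot string are hypothetical, made up purely for illustration.

```python
import math

# Commonly used airgun constant (approximately 2 * 7000 gr/lb * 32.16 ft/s^2).
# This is an assumption; some calculators use a slightly different value.
ENERGY_CONSTANT = 450240.0


def muzzle_energy_fpe(pellet_grains, velocity_fps):
    """Muzzle energy in foot-pounds from pellet weight (grains) and velocity (fps)."""
    return pellet_grains * velocity_fps ** 2 / ENERGY_CONSTANT


def velocity_for_energy(pellet_grains, energy_fpe):
    """Velocity (fps) a pellet of the given weight needs to reach a given energy."""
    return math.sqrt(energy_fpe * ENERGY_CONSTANT / pellet_grains)


def extreme_spread(velocities_fps):
    """Extreme spread (ES): fastest shot minus slowest shot in a string."""
    return max(velocities_fps) - min(velocities_fps)


if __name__ == "__main__":
    # Velocity implied by 13.7 FPE with a 7.9-grain pellet.
    v = velocity_for_energy(7.9, 13.7)
    print(f"Implied velocity: {v:.1f} fps")

    # Hypothetical chronograph string, invented for this example.
    string = [881, 883, 885, 882, 884]
    print(f"Extreme spread: {extreme_spread(string)} fps")
```

Under these assumptions, 13.7 FPE with a 7.9-grain pellet works out to a velocity in the low 880s fps, so an ES of 4 or 5 fps is a very small variation from shot to shot.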

Pop’s SLR,

What’s Vorteked?

I installed Tom Gore’s after market spring and guide invention. The vortek kit. It is a wonderful diy home tune kit. Zero vibration, smooth recoil, and much cheaper than a professional tune. Also, you do not have to mail the gun anywhere.

Good job Pop! I just got my QB-36 back together but I was looking for ideas on how to do this with it. Thanks for sharing it!

Reb

And don’t forget about a heavy gauge backstop. Some of these will shoot through a 2×4 with ease at 20 yards and beyond.

Heck, a .22 Diana m54 can put a dent into the steel of a trap rated for .22LR, if it hits the front of the lead collector rather than the sloping deflector surface.

We’ve taken care of filling the air gun but how about the other end. Is my local dive shop going to be able to fill my tank? I may not use a large dive tank but something like the small Benjamin.

sumcatone ,

Welcome to the blog.

You are asking a question that was not addressed in today’s report. It does need to be considered, but it isn’t part of this topic.

Would you like me to discuss it, or do you know the answer?

B.B.

Thanks for your response B.B.

My previous reply did not take for some reason. I don’t know the answer but no need to address it now. It would make a good subject for a future blog. I have considered a small tank for my Disco but didn’t know if a dive shop would have whatever they need to fill it. Kind of a murky area for me.

sumcatone,

Now I fully understand what you are asking. And that is worth a report.

B.B.

BB and sumcatone

It would be wise to call the dive shops first about filling your tank because the one shop in my area will not fill my tank unless I provide a current diver safety card, even if I am willing to sign a waiver. I get mine filled at my local fire extinguisher shop only because I know the owner and I work on his boats and four wheelers.

Some dive shops fill without card and some will not.

Buldawg

Edith

How do I go about putting an avatar next to my posts. I have a pic for my desktop background I would like to use as my avatar.

Buldawg

buldawg,

Go here to upload it:

http://en.gravatar.com

Edith

Edith

Do I have to create an account with WordPress first? I tried to sign in with my user name and password for this blog and it does not recognize it.

Buldawg

buldawg,

Read the directions on this page:

http://en.gravatar.com/support/wordpress-accounts/

Edith

Edith

I have created an account in WordPress and have created an avatar but do not know how to upload it to this blog. The instructions on the page you linked did not help, or I am a dummy and cannot figure out how to get the right instructions.

Buldawg

buldawg,

The email address you use on your gravatar account and this blog must match. Do they?

Edith

I haven’t logged into my gravatar account in many years. I just now entered my blog email address and my gravatar pw. The pw for the blog and gravatar are not the same. So, you can use whatever you want, and it also means I have 2 accounts with the same email address: one on gravatar & one on the blog.

After I was logged in, gravatar asked me if it could link to my wordpress account. I gave it permission, which took me to a page where I saw my current avatar and an option to replace it.

This same scenario should work for you.

Edith

Edith

My PW is the same for both this blog and the gravatar account, but it never asked me for permission to link to my WordPress account.

Buldawg

Edith and Reb

It appears I got it figured out as I now see my avatar on my posts. Way cool and thanks for the help.

I just went back into my gravatar account and talked real stern to it and it worked.

Thanks

Buldawg

That shaking finger has its way of getting stuff done, don’t it?

Reb

Works every time.

Got your 36 together yet?

Buldawg

test to see if my gravatar is posted

Success!!

Edith

Yes they are the same email address

Buldawg

buldawg,

I’ve reread this thread. I think what’s missing is that you need to create a separate account on gravatar. Apparently, you can’t just sign in with your wordpress account. So, create a new account on gravatar and use the same email address as you use for the blog. You can use the same pw, if you like.

After you’ve created that account, you should get a page at one point that asks about your wordpress account and connecting the avatar to it.

Edith

Edith

I think that is what I did, as I logged back into the gravatar account and hit the confirm button for my gravatar and it came up with a screen showing WordPress and another icon. I just clicked on the WordPress one and it took it from there.

Thanks for the help.

Buldawg

I had to create a file for my avatars then find them through the link on the site.

Reb

Reb

Yea, I did not do that, I don’t think, but as you see I got it to work. That’s my problem: I can fix any computer problem with a car but know just enough about a PC to get myself into trouble.

Buldawg

BB, re Teflon tape, many people have been calling DUCT tape Duck tape. The tape industry has given in, and I can now buy DUCT tape labeled DUCK tape. Thanks to our public education system, most people know what a duck is, but have no idea of what a duct is. Ed

ed,

That ship sailed long ago. When I was a kid we used the terms on the left:

iced tea –> ice tea

iced cream –> ice cream

skimmed milk –> skim milk

Today, everyone uses the terms on the right.

I can’t tell you how many people think a reservoir is a “resolver.” Or think that all airguns are BB guns — even the ones that shoot pellets. Correcting them is useless. They look at me like I’m crazy. I may be crazy, but not about this. My list of these types of grievances is quite long. I’ll end this reply now before I go off the deep end.

Edith

Ed,

Don’t get me started!

Ice cream used to be iced cream and ice tea was iced tea even more recently (in my lifetime).

As writers, etymology is one of Edith’s and my passions, and even we have our blind spots (except, accept).

And woe to the reader who dares tell me that etymology is the study of insects! 😉

B.B.

B.B.,

Etymology.

Seems we could be heading for a blog on Latin. If so, can we get a few days warning. Please.

kevin

Kevin,

I’ll make sure that doesn’t happen. Ever.

Edith

Edith,

Bless you.

kevin

kevin

A course in Latin and Greek derivatives was one of my favorite high school classes.

My Spanish teacher made sure we knew what all the root words were and where they came from so we’d be able to use them in other Latin-based languages such as Italian or French. I notice commonalities in different languages all the time due to this training but still speak only English.

Reb

You oughta try German, as they scramble the words all over the place. We had to have a foreign language to graduate high school. I took German because the Fräulein that taught the class was in her early twenties and very good looking, so I figured it would be easier to learn a foreign language if the teacher was young and pretty. Well, I did pass the class, but only with a D+, because to learn German you have to know the English language very well first, and since I was still grasping verbs, adverbs, nouns and so forth, German was totally backwards in how the sentence structure was laid out. I did learn how to count and cuss in German, though, only because I made the teacher so mad at me that she would send me to the office and chew me out in German. That gave me the desire to know what she was saying to me, and to count the number of times I got sent to the office.

Buldawg

My class started at 1:00 and it was hard to get back to schooling after lunch so I got in trouble a few times. She’d make me sit at her desk!

Reb

So you were the teacher’s pet, huh? Yea, I tried to get to sit by her desk but she did not do it that way, so I just went to the office and got my licks and went back to class.

Buldawg

Now Ms. Mehaffey was my English teacher and she was Hot! On one of her days off she came up to get something outta her desk (Right, she knew what she was doing!) in a parka and a pair of Jordache. Still a fond memory!

Reb

I know just what you mean, as the young Fräulein teacher would wear some low-cut tops and short skirts and would bend over when helping to explain the sentence structures. Why do you think I was sent to the office so much? My eyes were not on the textbook.

Buldawg

Doyle: A Scandal in Bohemia

Wulfraed

You lost me at the first few words of the quote, but then I was lost in the German class all the time too. Reading and English grammar have never been my strong suits, so German is way down on the list other than counting and cussing. I have forgotten most of the cuss words, but the numbers are still floating around in my head and sometimes I grab hold of them and count in German, because you cannot mix up numbers no matter what language it is.

That quote is exactly why I only got a D+ in German.

Buldawg

B.B./Tom,

Now we are in one of my realms of knowledge. The etymology of Entomology is the Old Greek entomos, for segmented, and logia, for the study of.

Regarding “Iced Cream” and “Iced Tea,” that evolution of terms is likely actually an evolution of PRONUNCIATION. Placing a “t” and even hearing it (when someone else says it) after that particular syllable is somewhat difficult. Over time, the pronunciation evolves through a mispronunciation category linguists call “deletion” or “omission.” Over time, if the language is living, such as English, as opposed to dead, for example Latin, the formal, “legitimate” pronunciation changes.

My students will often make such errors based on hearing a phrase but not often reading it. For example, “Taken for granted” becomes in their papers, “Taken for granite,” and “Would’ve,” the contraction of “Would have” becomes “Would of.” This is because young people do not read very much.

Confusing “except” and “accept” is not a mispronunciation, of course. It is a lexical selection error akin to “too-to-two,” “effect-affect,” “your-you’re,” “further-farther,” and even “fewer” and “less.” These are errors of word choice. Accept is an entirely different word than except. These, too, evolve, however. Most of my colleagues and I have come to ignore the lexical selection errors of “May” and “Can,” “May” and “Might,” and “Shall” and “Will.”

And yes, we still get a chuckle whenever someone writes or says that a convict was “hung.”

Hey, any word on an Umarex CO2 powered, pellet-shooting M1 Carbine?

Michael

Michael,

No word on the Carbine, but I think there is movement on the SAA.

B.B.

B.B./Tom,

SAA. Hmmm. Shakespeare Association of America? LOL. I know what it is, it just, well, never mind.

Michael

Michael,

I am so glad to learn of your knowledge of language and words.

Now, a question I have pondered for the past 50 years. When Shakespeare referred to releasing the ” … dogs of war.” I always thought he meant releasing the mechanical ratchet pawl that stopped the windlass or capstan (of war) from turning. Letting them loose would be akin to releasing the lock on a windlass under extreme tension. War would explode free!

Then I heard people who think he literally meant dogs, as in canines.

Do you know what he meant?

B.B.

B.B./Tom

Ah, from Marc Antony’s great speech in Julius Caesar. Dogs are not meant literally, probably, but rather are chosen for their ferocity and obedience as a metaphor for the footsoldiers to be “let slip,” (i.e. taken off their leashes) to wreak “Havoc” on Brutus and Cassius, the treasonists who killed Caesar and (literally) bathed their hands in his blood.

Hounds/dogs also long have had connotations of hell, tenacity, and the like. Also, Shakespeare based much of Julius Caesar on an earlier work by Plutarch called Life of Marcus Brutus. In that Plutarch refers to “dogs of war” as well. Therefore, Shakespeare’s metaphor is also a literary allusion to Plutarch.

Shifting from 400 years ago to more recently, an elite group of Cheyenne braves, essentially Special Forces, were called dog soldiers, and the novelist Robert Stone wrote a 1970s book called Dog Soldiers set in Vietnam. It was later adapted into a film (my other area of formal expertise) called Who’ll Stop the Rain, starring Nick Nolte, Tuesday Weld, and Michael Moriarty.

Intertextuality is infinite!

Michael

Michael,

Thank you for such a complete answer.

B.B.

B.B.

I can relate to what you are saying. A long time ago I got tired of hearing people say “anxious” when they really mean “eager”.

Charles,

Yep. Eager = ardent, desirous of. Anxious means with anxiety, nervous anticipation.

Michael

Sounds like there may…or may not…be a bug in the system. 😉

The rest of the story:

The first cloth adhesive tapes were made from duck which is a light weight canvas. Early derivatives were used by the military to seal ammunition cans and some watercraft and it was referred to as “duck tape” for various reasons. Only later was the name changed to reflect its use in HVAC ducting where it is now not even appropriate to use. What we call duct tape gets brittle and fails when used on ducting and has been replaced with metallic tapes.

Our use of the generic term “duct” allowed a tape company to trademark “duck tape” which had fallen out of use in favor of “duct” which is probably why it looks like they gave in.

blaine,

Well, I learned something from you today.

B.B.

Blaine,

It was quite an education for me when I learned the difference between cheap duct tape, name-brand duct tape, and true “gaffer’s tape,” which costs a LOT more than duct tape. Gaffer’s tape is strong, holds well, tears evenly, and it doesn’t break down.

Michael

Michael,

We’ve switched to Gorilla Tape. It’s duct tape+. It holds together our plastic recycling container through all sorts of weather and gross mishandling.

Edith

Edith,

I’m curious. What color is Gorilla tape? Gaffer’s tape is black as it is often used on cabling on, above, and around stages and would be a visual distraction to the audience if it were silver/gray.

I imagine Gorilla Tape is from the same company that markets Gorilla Glue, which my wife frequently uses on her various art projects. She likes it because while it is strong, it never seems to be absolutely, forever set, so that glued joints can be “coaxed” into being loose again if one needs to do that.

Perhaps I can use a quest for Gorilla Tape as an excuse to go to the hardware store! :^)

Michael

Michael,

Gorilla tape is black, and it is made by the Gorilla glue company.

Edith

Edith,

Thanks very much! Gorilla Tape sounds like the gaffer’s tape I am familiar with. I’ll go out and buy some for sure now.

Michael

You forgot one other facet…

True gaffer’s tape also removes without residue (even less than painter’s masking tape, which sometimes lifts paint).

Well, the name Duck tape is right. It was called Duck tape because it was used by the military to seal ammo boxes from water and moisture, hence the name duck tape.

J-F

Well it seems I should have scrolled farther down before answering…

J-F

“Duck Tape” is actually a BRAND of duct tape — and not (to my knowledge) a generic name yet… Whereas Bayer lost Aspirin, and I think Thermos may have lost that too… Of course, in the UK, Hoover has become a generic for vacuum cleaners.

… And most of those duct tape lookalikes have given up any pretext of being useful AS duct tape (the original aluminized material used to seal the joints in HVAC ducts, aluminized for the thermal response). I’ve got a bit left from a roll of Zebra Stripe tape (last used to block off most of the inlet duct in the basement — I don’t need the HVAC unit sucking in mostly cold basement air and not drawing air from the upstairs; so instead of a square foot opening I’ve left only the corners open).

What DuPont is trying to prevent is their trademarked name becoming public domain. Examples are Xerox instead of copy, or Jello instead of gelatin flavored dessert.

Fred DPRoNJ

Fred,

Don’t forget Kleenex vs. facial tissue! In the UK people rarely ever say “vacuuming.” They call it “Hoovering,” whether it is with a Hoover, Dyson, Dirt Devil, Eureka, or Kirby.

Michael

Hi BB,

Is the MOEN Silicone lubricant that you can get in the plumbing department at Home Depot suitable?

($6.95 cndn for 6 g)

Vana2

Vana2,

Welcome to the blog. No idea on MOEN. I’d have to see the spec sheet to know.

We used a Dow-Corning product at AirForce.

B.B.

Vana2,

I use either plumber’s grade or auto parts silicone grease when installing new seals on my pump guns for initial rubber to metal lubrication.

Reb

Hi Reb,

Thanks for the input!

I couldn’t find an MSDS for the MOEN silicone lubricant, but this product is recommended for lubricating O-rings on water filtration systems. Will give it a try and post if I find there are any issues.

Vana2

Sorry for the off topic.

But does anyone know who I should go to, to obtain a new rear sight for an Air Ruger Mark I? The thing is just very fragile.

Regards,

Gerardo,

You looking for something like this? /product/crosman-lpa-mim-rear-sight-for-crosman-guns-with-a-steel-breech?a=4385 There are also a variety of peeps to choose from.

Reb

Found it. It is distributed by UMAREX USA; hopefully they can sell me an original sight.

Thanks

Gerardo,

I’m not familiar with the Air Ruger Mark I. Is that gun sold in the U.S.?

B.B.

It is

/product/ruger-mark-1-air-pistol?m=2689

I have all manner of airguns here. I have CO2 guns, breakbarrels, sidelevers, underlevers, PCP guns. When I need to snap off a quick killing shot, I reach for my Ruger Air Hawk. If I’ve got time to monkey with air tanks I reach for a PCP gun, any one will do. If I’m target shooting for fun but need accuracy I reach for my Baikal MP61. If I just want to make a target go away I grab my custom Drozd Blackbird, which is also a PCP gun, set it to full auto at 2000 rounds per minute, walk my point of aim up to the target and cut it in half. Every gun I have has a specific use. I’ve found that with PCP guns it’s much easier to keep an air tank than to use a pump. Pumping gets a bit rough once you go over around 2500 psi.

BB, I currently do not own any PCP airguns, but am looking into getting my first. (more than I like to spend for a “toy”) But I cannot decide which one to get. I want a lot of power and something bigger than a .177 or a .22, what would be your suggestion before I jump into a Dragon Claw???

Chris,

The AirForce Escape makes 97 foot-pounds in .25 caliber. Is that enough for you?

B.B.

Chris Wilhelm, if price is a concern and you still need all the extras (pump, adapters, scope?, etc.), you can’t beat a Marauder in .25 cal. I have a synthetic stock for my first and I’m glad I went that route to learn about PCPs. Or you could go with B.B.’s suggestion, or how about a Rainstorm 2 in .25 or 9mm? Price is good, they get good reviews, there are parts available, and there are some good tuners out there to tweak them. Also you can go high end with a Daystate Air Ranger or Air Wolf that can be set up in many configurations... Oh my head hurts... There are many options...

Chris Wilhelm, I second BB’s suggestion. I own an AirForce Escape in .25 cal. It is truly one of the most powerful .25 cals out of the box on planet earth. It is DEADLY accurate! It’s lightweight and pretty darn cool looking at that. Put on a quality scope and there is not much that walks on four legs and lives in these states that it won’t take down with the proper head shot. You can learn anything here on Pyramyd Air by reading reviews, watching airgun videos and following this blog to learn about these airguns. Have fun doing it, because this is a growing sport and the technology in airguns is getting out of this world as we speak.

Chris Wilhelm,

Do not forget about used air guns. I swore I would never buy a used gun but I’ve bought two in the past year and they are every bit as good as new. Just make sure you buy from a reputable site.

G&G

And I did like when PA started showing the different things linked together to buy at one time. Sure helped take the guess work and researching out of the equation.

Buldawg,

By the way, nice picture! Last night I rolled some of my skin into the finish, so I steel-wooled it out this morning and will be applying as many coats as the humidity will allow. A friend gave me a tin of .177 precision max pointed pellets that I just looked the weight up on (7.2 grains) and I wanna run them through it, so I’ll be getting back on it!

Reb

Reb

Thanks for the compliment on the avatar. Do you know where it came from? Remember the movie Harley Davidson and the Marlboro Man with Mickey Rourke and Don Johnson? The Harley that Don Johnson rode was a 1994 FXR with rear struts in place of the shocks, the rear fender cut off right behind the seat, and a bare steel gas tank with just clear coat on it. It was made by the now out-of-business motorcycle company Black Death Motorcycles, and that is their company logo.

Glad you are getting the 36 done and let me know when it is back up and shooting.

Buldawg

I’d never heard of that movie before but I’ll keep an eye out for it. Thanks!

B.B., slightly off topic, I’m trying to find info right now on Ballistol. Would this be OK to use on my Beeman 97k blue laminate stock? I would think this would be fine seeing that the laminate is sealed with a lacquer?

Ricka,

Ballistol will have no effect on your rifle’s stock. The finish is sealed.

B.B.

Edith,

On the info sheet for the new Colt Python Revolver it states that the gun is also available with wood grips but I do not see them listed as an accessory or option anywhere. Is it available with wood grips? Thanks.

G&G

G&G,

My bad. We originally thought they were wood before we got the product in stock. They’re plastic grips made to look like wood. I’ve corrected it in the system.

Thanks,

Edith

Edith,

Thanks for getting back to me, but darn. It would be beautiful with wood grips. Do you happen to know offhand if anyone makes wood grips that would fit?

G&G

G&G,

I have no idea.

Edith

Edith, BB- I must have missed the ship when it sailed. You should see the look on some people’s faces when I tell them that I am a rabologist! It is a collector of walking sticks. Each time that I went to Africa, my guide sent me a want list and several rolls of duct tape were always on it. It was hard to get (in Zimbabwe) and my guide was very creative in using it to make repairs. His binocular was held together with it, so I brought him a good binocular.

zimbabwae ed,

My wife has a cousin who is a horologist. He is an expert clock/watch maker and repairer. That term usually raises a few eyebrows. By the way, he occasionally shows up on the Antiques Road Show as one of their experts.

G&G

I had one teacher whose paddle was a baseball bat with the sides shaved down and another that named his TOYOTA(you asked for it, you got it), And the tennis coach AKA my Algebra teacher was the worst!

Reb

I need to move some stuff myself. It’s just tough to figure out what someone else considers a treasure.

I’m glad you saw that before the blog refreshed! I knew you’d like it. I wonder if seals would need upgrading in order to hold up under HPA pressures? I’m following your leads. Carry on.

Reb

Reb

That would probably be a trial and error process, as I doubt that there are any seal kits available for the 600 specifically for use with HPA, and not knowing what the valve assembly even looks like I cannot even guess if some of the newer seals would work.

I never realized the 600 was a repeater, so that has also piqued my interest, but it would definitely have to shoot faster than 400 fps or so. I would want it to be capable of at least somewhere in the high 500 to low 600 fps range to be acceptable for me to spend the time and money to build and modify.

Buldawg

It’s one of the guns I’ve been reading everything I can dig up on for a couple months now, almost ever since I got outta the hospital.

Reb

Once I get my other project done I will be looking into the 600 some more for sure.

I had a bad day today with my tinnitus being the worst it has ever been, and it kept me in bed until a couple hours ago. I just wish I had a volume control to turn it off.

The weather has been rainy and sunny and just plain unpredictable, and I don’t know if that had anything to do with it, but I can at least bear the ringing now and think clearly.

Keep me informed about what more you come up with on the 600s.

Buldawg

Well, my latest findings make me wonder if the loader can handle the extra stress of the higher pressures or if the port would need a restrictor. I was glad to see the barrel was that easy of a swap, and I was sure some bolt-on would allow bulking, but it sounds like you may be right about getting spare parts. It may be a project reserved for those with milling equipment. I don’t even have a 110 drill anymore and my Versa pack set needs a set of batteries. I’m gonna go nuts if I don’t get my hunting/fishing license soon!

Reb

I have not done any research on parts availability for the 600, but that would most likely be the Achilles heel of trying to do that project. The link you gave for the conversion was done back in ’09, so parts may be a problem.

I have limited machine tools myself, which amount to a drill press and grinder, and I just learn to improvise as best I can. I have bought some machine end mills and center drills to be able to do some of the simpler operations here at home. I have used my drill press as a milling machine and had the bit hang up in the work piece and bend the drill press’s shaft. Luckily the chuck did not fly off and hurt me, because it is just a swaged fit on the shaft and has no bolt or screw holding it on. A drill press is not meant to be used as a mill. For the more intricate jobs I go to my friend who has a machine shop at his house.

Buldawg

buldawg

I sent you an email with that tracking number. Did you get it?

Gunfun

I did get the email. I have just got a chance to finally get on the PC to check messages and all. I was feeling bad this AM and had to stay in bed most of the day, as my tinnitus (ringing in the ears) was really extreme today and about drove me nuts until a couple hours ago, when it finally subsided to a bearable level. It has been raining with varying barometric pressures for the last two days and I don’t know if that affects my tinnitus or not, but I just wish I had a volume control in my head that I could turn down at times.

I will check out the tracking info, I just wanted to let you know I got the email and will be waiting for the tray to get here and let you know as soon as it does.

This has been the worst that my tinnitus has ever been and I would rate it about like a severe migraine. I can’t say for sure, as I don’t get headaches unless they are self-induced, and since I don’t drink any more that is not an issue. But the tinnitus has got to be just as bad, because it is like having a wailing high-pitched squeal in the middle of your head that you cannot escape from or turn off.

Talk to you later and will let you know when it gets here.

Buldawg

buldawg

Some of the people at work have that. I have it slightly, but nothing like you have. I hope mine doesn’t get worse.

But let me know when you get the tray. Talk to you later.

Gunfun

My doc gave me some medicine to take when it first started about a year ago, and he said I had an infection in my ears. So I took the meds and it helped for a while but did not get rid of it completely. I do not have any infections any more, as I see my doc every month, and I am just going to have to live with it. It has never been as bad as it was this morning, though.

I hope yours does not get worse. If you do not wear hearing protection at work around the noisy machinery you are working with, you need to start. My doc said what most likely caused mine was working in an environment of loud noises without using proper hearing protection. I never knew any better 30 years ago what long-term exposure to the normal noise in a service department would cause as we get older.

Be safe and I will let you know when I get the tray and how it fits.

Buldawg

buldawg

I do wear ear plugs about 90% of the time. So maybe that helped me out.

And I might try to make me a tray for my Hatsan tomorrow. Pretty well got myself caught up. Going to try to make it at lunch tomorrow night. Will see how that goes.

Gunfun

I wish I had known years ago the damage that working around loud noises for long periods without ear plugs would do. When at Harley we had to wear ear plugs when dyno testing and in the test stands or any other areas with loud machinery or equipment running. But by then I think the damage had already been pretty well done, and of course the loud exhaust from my hot rods and bikes did not help. Both my Harley and Kawi have non-muffled open exhausts, and the Kawi is a very loud high-pitched scream at 10K rpm.

Hope you get your tray made by the time I get mine so we can test at the same time and compare results.

I got an email from the GTA today about a guy that is making bullets for .25 cal air guns that are swaged, not cast, and sells 37gr spitzer hollow points for 22 bucks per 100 + 6 for shipping, or 45gr spitzer HPs for 25+6 per 100. They won’t do me any good because I don’t have a .25 cal, but I thought you may be interested because they are more like a bullet than a pellet. Here is the link.

http://www.ratsniperslugs.com/home.html

Talk to you later,

Buldawg

buldawg

BB did a blog on swaging bullets/ammo/pellets a while back. I had considered making a swage set in the past. I have reconsidered that and I’m not. It’s hard enough right now to find a good pellet that fits a particular gun with all the choices out there.

Unless he’s got a good process down I think swaging would be a difficult thing to consistently repeat. I think I’m just going to stick with my tried and true pellets for now. I finally got the pellets that my guns like figured out and the right scopes and sighted in the way I like. So now what I’m going to try to do is just enjoy some shooting time. Time for a little break for me. It’s taken a while to get mine where they are at right now but I’m finally satisfied.

What is heavy in my mind right now is that .25 cal. 2240 conversion. I have been working 12 hr. days and around 10 to 12 hrs. of overtime every week for a little over a month now. So my next project will be the .25 cal. 2240 conversion air gun. All my extra money is going that way real soon. About another week and I will order the breech and shroud set up that Diabaloslinger provided the link for.

Yep 2240, .25 cal. pellet chuck’n for me pretty soon. 🙂

Gunfun

A .25 cal 2240 conversion will be a nice lightweight powerhouse. I just wonder how many shots you would get with the setup. I guess I missed the link Diaboloslinger sent you in the blog. Is it in this blog on what you need for a PCP?

That would be real cool. I don’t know what quality his pellets are, but he has been endorsed by the GTA forum’s moderators as making a good product, so I just thought I would let you know. I do understand that when you finally find the right combo of pellet and gun it’s hard to start experimenting all over again. I am still in the testing stages and trying to find the pellets all my guns like, so I understand that if it ain’t broke don’t fix it.

Talk to you later and will let you know when my tray gets here tomorrow.

Buldawg

Thanks for the information. Out of the different air sources, which one is recommended/needed? I see air compressors, air tanks, and small air pumps. Would any of those work for PCP guns? In the case of an air tank, where can you fill it up?

Thank you.

AHMSA,

These air sources are recommended for different uses. We first need to know how you want to use the air before we can recommend one source over another.

For example, for a big bore airgun a carbon fiber tank would be the best and a hand pump would be the least appropriate. That’s because big bore guns use lots of air.

But for a precharged gun that operates on 1800 psi air, a hand pump is probably the best. A carbon fiber tank will work, but it is overkill.

B.B.

B.B., thank you very much for mentioning plumber’s tape. So many people complain about their gun not taking air when they use a hand pump and fill probe. 99% of the time I just ask, “Did you use plumber’s tape on the threads before you screwed your probe in?” and the overwhelming response is, “No, I wasn’t told to.” It’s a 99-cent fix to a $50 problem if you were to send your gun back and forth for a repair.

I’ve got a major yote problem. Live within city limits. I assume air guns aren’t firearms in my city. Hired a trapper- what a waste of $900. Time to take matters into my own hands. I think I need a Benjamin Marauder .25 cal. I’ll be staking out my prey in a blind- maybe a 40 yd shot. But more likely from my vehicle. The yotes are seen at night and at dawn mostly. Lots of questions:

1) what do I need in the way of optics for this mission? Laser, some kind of night vision setup? Scope?

2) what’s the difference between an air fill and a CO2 fill? I’ve got CO2 tanks on hand for my kegerator, so…

3) what attachments do I need to fill it?

4) should I get a Call? If so, what kind?

Thanks for your help!

yotefreein73,

Welcome to the blog.

For the benefit of our foreign readers, let’s explain that by “yote” you are referring to coyotes.

I think the .25 caliber Marauder is too little gun for a coyote. Would you hunt them with a .22 short? Well the .25 caliber Marauder is even less powerful.

I would recommend an AirForce Escape in .25 caliber at a minimum.

Just because airguns aren’t considered firearms in your community doesn’t mean it is legal to discharge one in the city/county limits. Check with the police and with the Fish and Game people in your area.

Hunting from cars is illegal in most states except for handicapped shooters.

CO2 is not a gas to use in a hunting airgun. It produces way less energy than compressed air, plus it is temperature dependent and will peter out at the very times you need it.

If it is legal to hunt coyotes with airguns after dark, then yes, night vision is recommended. It is expensive, but it enhances your shooting possibilities.

As far as calls go, they will make all the difference. If you don’t use one you are like a meteorite hunter looking up at the sky and waiting for a meteor to streak across.

The attachment situation can be resolved after you select the gun and fill method. I covered that four reports back in a report titled Things you need when you buy a PCP. Link to it at the top of this page.

Good luck!

B.B.

Thanks for your rapid feedback! Well, I’m confused now. I’ve seen a number of YouTube videos of guys taking yotes and boar with a Marauder. The gun you recommend is lighter and smaller, which is good, but I don’t like the single shot. Loading those little pellets 1 x 1 ain’t no fun. Not thrilled with the higher price tag either. Conflicted.

Thanks again.

Yotefreein73,

You only see the videos where one shot seems to take down a coyote or boar. You don’t see all the videos where there isn’t a successful kill with one shot.

Several years ago, Gamo was flaunting their spring rifles as wild pig killers. The pigs were tethered, not shot from an expected hunting distance and the experienced hunter had to shoot the pigs repeatedly to kill them. The critters died a tortured, squealing death.

There was, however, one video where the shooter shot the pig one time in the temple and it died right away. If a person who hunts all the time and makes his living going on hunts can take down the critter with one shot only occasionally and only when the critter is tethered, you probably can’t expect to do the same thing with a Marauder while the animal is moving about and at further distances.

FWIW, Crosman does NOT recommend the Marauder for coyotes or boars.

One shot, one kill. Get a gun that’s made for the job. Please.

Edith

Ok, so which air rifle is best for a coyote and why is it better than a .25 marauder?

73,

I wouldn’t recommend ANY smallbore air rifles, since most are not as powerful as a .22 short. I wouldn’t hunt coyotes with a .22 short. Would you?

I would select a .308 or possibly .357 big bore rifle for coyotes, if I hunted them with an air rifle.

B.B.

As has been stated, if you must hunt coyotes with an air rifle, I would go along with .357 as a minimum as a rule, but they do vary in size by sex and region, ranging in weight from 20 to 70 lbs. The big question in a city situation is whether it is safe, even if it is somehow legal. And if more than one is visiting, you are on a family territory, which raises the question of how many you are dealing with and whether you have the ability to kill more than one. I can state with some confidence that even if you only see one, another will likely witness the event. My point is that buying a big bore PCP and all the extras to fight coyotes is going to be an expensive losing battle.

I might as well give you my experience. I have a small poultry flock (50+ guineas and 26 chickens) on 1200 acres in rural Kansas, with surrounding ponds, creeks and forest, and yes, plenty of coyotes and occasionally worse. Though I have the firearms, I have never shot at a coyote, as I know I will never be enough to even dent the population. The good thing about coyotes is that they are smart and therefore can be deterred. Where I am, I just walk them down, and if they come close at night I just whistle loud and shine a flashlight on them.

My personal opinion is that your best bet, at least as a first attempt, should be a multi-pump with pellets on the lowest power, and if you are in a city, use the house as a blind, as windows and doors make great gun ports.

I have loved air rifles now for over 10 years. Have owned several and have shot in competition. Made it to the top 10 in BRV, using a custom rifle from Jolly Old England. Loved it all and everything about it, except the price. Then thinking about this country, the once great US of A and all the bad stuff and killings that are going on here every week, I thought, “why am I so interested in air rifles”? Sure I can shoot them in my back yard, but what kind of protection would an air rifle be if I needed it for any kind of protection? Good question.

I had best stop here. Have a good day.

DS

Ahem:

I thought “etymology” was the “bug infestation” arising from the history & origin of words!

Rich

Your blog and your personal replies have been very helpful for questions I have regarding my Benjamin Maximus .22. I just wanted to make sure I haven’t missed anything.

I got Crosman chamber oil to put in the fill port occasionally before pumping and to lube the O-ring on the bolt. I also use some on the exterior moving surfaces of the hand pump.

I purchased FP-10 to lube the Crosman Premier pellets I’m using. I’m also using it to keep exterior metal parts from rusting, and on the metal to metal part of the bolt.

The only other O-ring visible is the female end of my pump, so I put a little silicone grease on it.

Do I need to take the gun out of the stock periodically to lube the trigger somehow? If so, would FP-10 work and just apply it where I can see metal contact?

Thanks

John,

The Maximus trigger can be made much lighter than the stock 5-6# factory pull. They are not hard to work on. 2 screws can be added. Both screws screw right in. 1 advances the first stage and the other is a trigger overtravel stop. No drilling. 2 springs can be modified. As for lube, you are not going to do much without pulling the side plate off the trigger box. The rest of your ideas sound fine. I used 3 types of lube after pulling all of the parts and cleaning them: silicone grease on plastic to plastic, white lithium for plastic to metal and moly for metal to metal.

If you are interested, hit me up on the (current) blog. If you are not comfortable getting inside the trigger, then just keep shooting it as is. It is not hard, but everyone that modifies anything on a stock air gun must also be able to accept the responsibility as well. Gunfun1 and I have both done similar trigger tunes on the Maximus.

I’d be interested in some instructions for tuning the trigger on the Maximus.

John,

Go to the (current) blog that started on Friday 2/23/18. Look at the (bottom of the comments) for my reply. Very few people will ever see your comments back here on a 2015 blog.

Johncpen,

FP-10 is a good lube for pellets, but I’m not sure how good it is at preventing rust. At present I think Ballistol leads the pack in rust prevention.

I do plan to test a new oil for rust prevention soon.

B.B.

That’s good to know. Do you know if Krytech works good for lubing pellets?

Johncpen,

No, I don’t.

B.B.